Automatic differentiation#

Refs:

https://alexey.radul.name/ideas/2013/introduction-to-automatic-differentiation/

http://autodiff.org/

https://www.kaggle.com/code/borisettinger/gentle-introduction-to-automatic-differentiation

https://en.wikipedia.org/wiki/Automatic_differentiation?useskin=vector

https://towardsdatascience.com/pytorch-autograd-understanding-the-heart-of-pytorchs-magic-2686cd94ec95

https://d2l.ai/chapter_preliminaries/autograd.html

https://arxiv.org/abs/1502.05767

https://www.youtube.com/watch?v=wG_nF1awSSY&t=0s

https://www.youtube.com/watch?v=ZGSUrfJcXmA

https://www.youtube.com/watch?v=YQ7RIHMWA88

How to differentiate in the computer?

Exact: Not always available. Imagine a neural network with millions of parameters, you cannot just do it manually.

Symbolic (sympy, mathematica, …): Expression tree explossion (see, for instance, the multiplication rule). Derivative of data struct?

Finite differences: Stability and precision. O(n) evaluations for n-dimensional gradient.

Applications of Automatic Differentiation#

Optimization (multi-dimensional) based on gradient computations (neural networks)

Sensitivity analysis (\(\partial(result)/\partial(input)\))

Physical modeling (computing forces as derivatives of potentials, computing equations of motion from Lagrange or Hamiltonian equations)

Probabilistic inference (Hamiltonian Monte Carlo)

Machine learning

…

Forward-mode (directional derivatives): Dual numbers#

Based in infinitesimal calculus: \(\epsilon^2 = 0\). So a Taylor expression for an approximation is

The pair \((x, f'(x))\) is called a dual pair. And there are operating rules such us

By extending the basic operations to this dual numbers, we can compute the derivative automatically by evaluating the epsilon coefficient of \(dual-version(f(x+epsilon 1))\)

The following is a simple implementation (https://www.kaggle.com/code/borisettinger/gentle-introduction-to-automatic-differentiation)

class DualBasic(object):

def __init__(self, val, eps):

self.val = val

self.eps = eps

Python magics for some basic ops

class DualBasicEnhanced(object):

def __init__(self, *args):

if len(args) == 2:

value, eps = args

elif len(args) == 1:

if isinstance(args[0], (float, int)):

value, eps = args[0], 0

else:

value, eps = args[0].value, args[0].eps

self.value = value

self.eps = eps

def __abs__(self):

return abs(self.value)

def __str__(self):

return "Dual({}, {})".format(self.value, self.eps)

def __repr__(self):

return str(self)

class DualArith(object):

def __add__(self, other):

other = Dual(other)

return Dual(self.value + other.value, self.eps + other.eps)

def __sub__(self, other):

other = Dual(other)

return Dual(self.value - other.value, self.eps - other.eps)

def __mul__(self, other):

other = Dual(other)

return Dual(self.value * other.value, self.eps * other.value + self.value * other.eps)

And also include division: $\( \frac{1}{x+ae} = \frac{1}{x} - \epsilon \frac{a}{x^2}, \)$ so

class DualDiv(object):

def __truediv__(self, other):

other = Dual(other)

if abs(other.value) == 0:

raise ZeroDivisionError

else:

return Dual(self.value / other.value,

self.eps / other.value - self.value / (other.value)**2 * other.eps)

Now this is the dual class

class Dual(DualBasicEnhanced, DualArith, DualDiv):

pass

This is now an example for the automatic derivative of \(x^2\)

def square(x):

return x*x

If we want to compute the derivative at \(x=3\), we use

square(Dual(3.1,1))

Dual(9.610000000000001, 6.2)

As you can see, in the primal part we have \(3^2 = 9\), and on the dual part we have the derivative evaluated at \(x=3\).

Now let’s use another function,

def cube(x):

return x*x*x

cube(Dual(2,1))

Dual(8, 12)

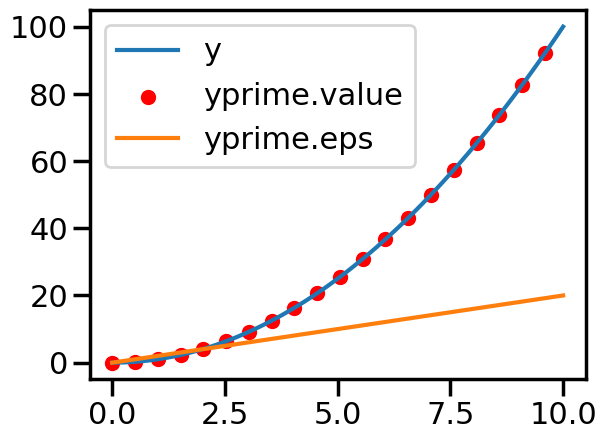

Let’s plot

import matplotlib.pyplot as plt

import seaborn as sns

import numpy as np

sns.set_context("poster")

# Data

x = np.linspace(0.0, 10.0, 100)

y = square(x)

yprime = square(Dual(x, 1))

# Plot

sns.lineplot(x=x, y=y, label="y")

sns.scatterplot(x=x[::5], y=yprime.value[::5], marker="o", label="yprime.value", color="red")

sns.lineplot(x=x, y=yprime.eps, label="yprime.eps")

<Axes: >

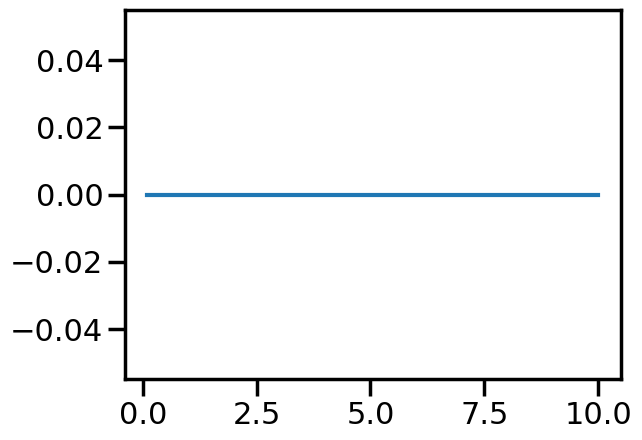

What about the error?

print(np.abs(1-yprime.eps/(2*x)))

sns.lineplot(x=x, y=np.abs(1-yprime.eps/(2*x)))

[nan 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0.

0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0.

0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0.

0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0.

0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0.

0. 0. 0. 0. 0. 0. 0. 0. 0. 0.]

/tmp/ipykernel_2293/4071155334.py:1: RuntimeWarning: invalid value encountered in divide

print(np.abs(1-yprime.eps/(2*x)))

/tmp/ipykernel_2293/4071155334.py:2: RuntimeWarning: invalid value encountered in divide

sns.lineplot(x=x, y=np.abs(1-yprime.eps/(2*x)))

<Axes: >

More general implementation#

What about other functions?

def myexp(x):

return np.exp(x)

myexp(Dual(3,1))

---------------------------------------------------------------------------

AttributeError Traceback (most recent call last)

AttributeError: 'Dual' object has no attribute 'exp'

The above exception was the direct cause of the following exception:

TypeError Traceback (most recent call last)

Cell In[11], line 3

1 def myexp(x):

2 return np.exp(x)

----> 3 myexp(Dual(3,1))

Cell In[11], line 2, in myexp(x)

1 def myexp(x):

----> 2 return np.exp(x)

TypeError: loop of ufunc does not support argument 0 of type Dual which has no callable exp method

We need to use specialized libraries that have already and correctly implemented automatic differentiation, like

pytorch autograd: https://pytorch.org/tutorials/beginner/blitz/autograd_tutorial.html

Tensorflow autodiff: https://www.tensorflow.org/guide/autodiff

numpy autograd: https://github.com/HIPS/autograd

…

Using pytorch#

https://pytorch.org/tutorials/intermediate/forward_ad_usage.html

https://towardsdatascience.com/getting-started-with-pytorch-part-1-understanding-how-automatic-differentiation-works-5008282073ec

!pip install torch

#!conda install pytorch

# Example from https://sebarnold.net/tutorials/beginner/blitz/autograd_tutorial.html

import torch

from torch.autograd import Variable

x = Variable(torch.ones(2, 2), requires_grad=True)

print(x)

y = x + 2

print(y)

print(y.grad_fn)

z = y * y * 3

out = z.mean()

print(z, out)

out.backward()

print(x.grad)

Original example#

import torch

import matplotlib.pyplot as plt

import seaborn as sns

sns.set_context('poster')

x = torch.linspace(0.0, 1.0, 100, requires_grad=True)

y = torch.exp(2*x)

# Compute the gradient using torch.autograd.grad

grads = torch.autograd.grad(y, x, torch.ones_like(y), create_graph=True)[0]

# Convert torch tensors to NumPy arrays for plotting

x_values = x.detach().numpy()

y_values = y.detach().numpy()

dy_dx_values = grads.detach().numpy()

# Plot y and dy_dx

sns.lineplot(x=x_values, y = y_values, label=rf'$\exp(2x)$')

sns.lineplot(x=x_values, y = dy_dx_values, label=rf'$dy/dx$')

Using tensorflow#

https://www.tensorflow.org/guide/autodiff

!pip3 install tensorflow

#!conda install tensorflow

import numpy as np

import matplotlib.pyplot as plt

import tensorflow as tf

x = tf.Variable(3.0)

print(x)

with tf.GradientTape() as tape:

y = x**2

# dy = 2x * dx

dy_dx = tape.gradient(y, x)

dy_dx.numpy()

import tensorflow as tf

x = tf.linspace(0.0, 10.0, 100)

with tf.GradientTape() as tape:

tape.watch(x) # You need to watch the variable x explicitly.

y = x**2

#print(y)

dy_dx = tape.gradient(y, x)

print(dy_dx)

# Convert TensorFlow tensors to NumPy arrays for plotting

x_values = x.numpy()

y_values = y.numpy()

dy_dx_values = dy_dx.numpy()

import seaborn as sns

sns.set_context('poster')

# Plot y and dy_dx

sns.lineplot(x=x_values, y=y_values, label=rf'$y = x^2$')

sns.lineplot(x=x_values, y=dy_dx_values, label=rf'$dy/dx$')

Exponential#

import tensorflow as tf

x = tf.linspace(0.0, 1.23, 100)

with tf.GradientTape() as tape:

tape.watch(x) # You need to watch the variable x explicitly.

y = tf.exp(2*x)

#print(y)

dy_dx = tape.gradient(y, x)

print(dy_dx)

# Convert TensorFlow tensors to NumPy arrays for plotting

x_values = x.numpy()

y_values = y.numpy()

dy_dx_values = dy_dx.numpy()

import seaborn as sns

sns.set_context('poster')

# Plot y and dy_dx

sns.lineplot(x=x_values, y=y_values, label=rf'$y = exp(2x)$')

sns.lineplot(x=x_values, y=dy_dx_values, label=rf'$dy/dx$')

Implementation using autograd#

https://github.com/HIPS/autograd You will do it